This post is a summary of the tricks I used to optimize the akarso.co cold start times. Akarso is open source, so you can see how I did it in detail if you want.

End result of this post

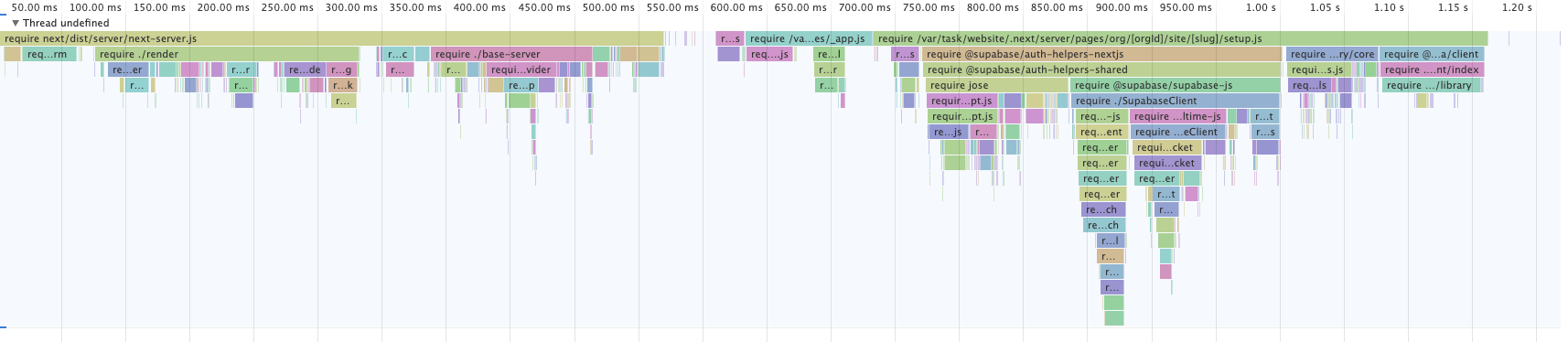

I managed to bring the Akarso Next.js app from 5+ second cold starts down to about 1 second, but there is a catch: these are execution times (profiling starts only when the first line of code is evaluated), so there is an additional period of time spent provisioning the function. In this example app, provisioning time is about 1 second.

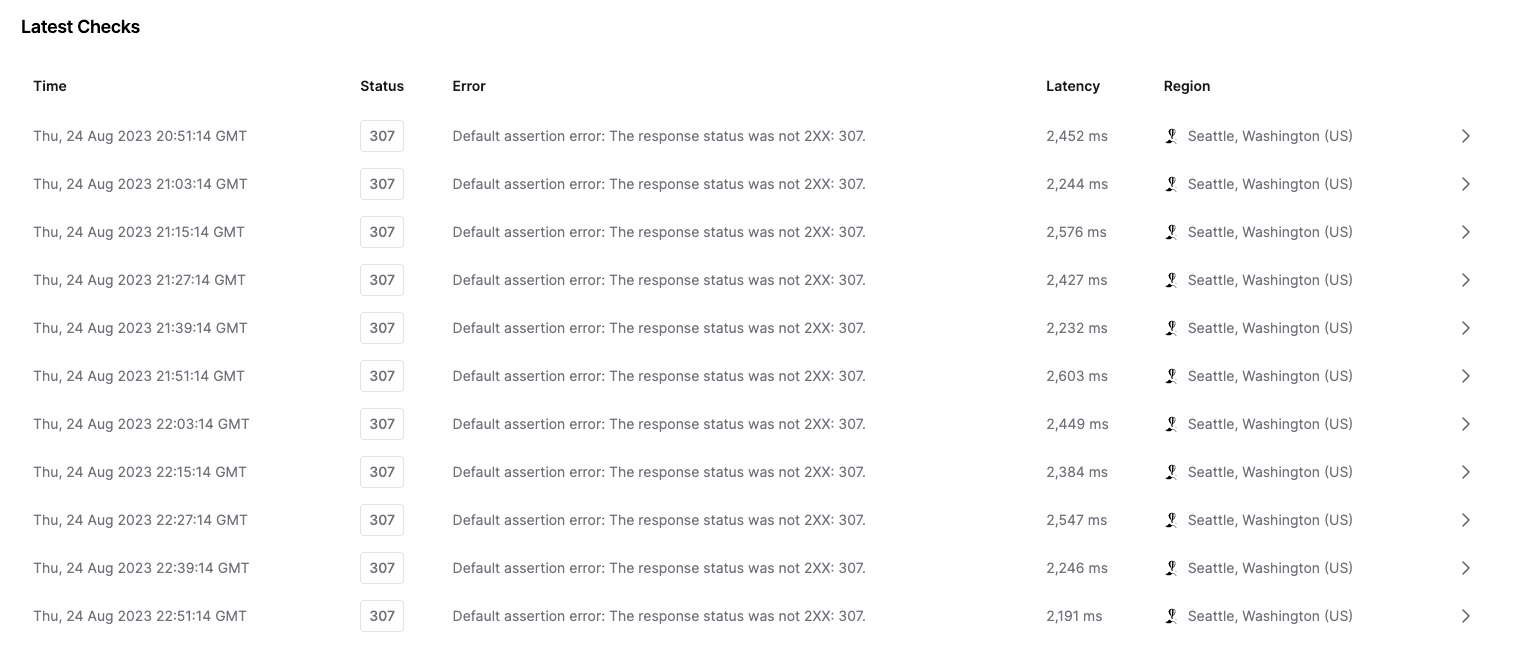

I measured the latency of the lambda every 10 minutes in the same region of the deployed code and as you can see the latency was about 2.3 seconds (while the execution time was about 1.2):
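A measurement like this can be scripted. The sketch below is one assumed way to time a full request from Node.js 18+ (which ships a global `fetch`); `measureLatency` is a hypothetical helper, not the script used for the chart above, and the URL in the usage comment is the placeholder used elsewhere in this post.

```javascript
const { performance } = require('node:perf_hooks');

// Hypothetical helper: times one full request, including reading the body.
// `fetchImpl` is injectable so the function can be exercised without a network.
async function measureLatency(url, fetchImpl = globalThis.fetch) {
  const start = performance.now();
  const response = await fetchImpl(url);
  await response.arrayBuffer(); // wait until the whole response has arrived
  return { status: response.status, ms: performance.now() - start };
}

// Usage (commented out so the snippet does not keep the process alive):
// setInterval(async () => {
//   console.log(await measureLatency('https://my-app.vercel.app/api/auth'));
// }, 10 * 60 * 1000); // every 10 minutes, as in the measurement above
```

Run it from a machine in the same region as the function, otherwise network distance is added to the number.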

Import only what you need

It’s very easy to bloat your function size with code that is not useful. You should clearly separate code used by your pages. Vercel will only require files that are needed for your page. If you group your code in big

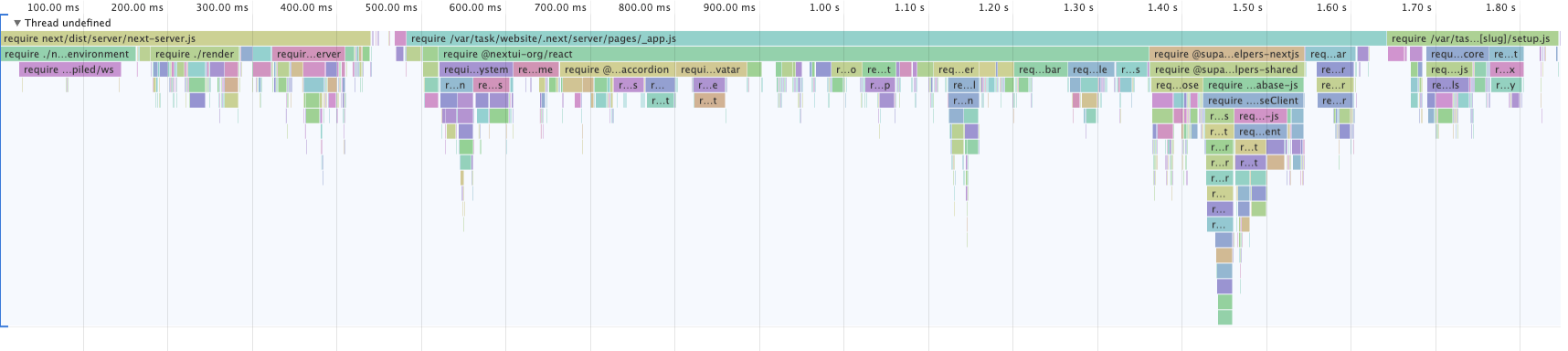

utils.js files your pages will end up very bloated.Here is an example profile with this issue, despite being an API handler this function is importing the

_app file, probably because that file is exporting some utility used by the API handler:
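One way to spot this kind of accidental import locally is to compare Node's module cache before and after loading a file. This is only a sketch: `modulesLoadedBy` is a hypothetical helper, it only sees CommonJS files loaded through `require` (built-in modules never appear in `require.cache`), and the path in the usage comment is made up.

```javascript
// Hypothetical helper: returns the files that were newly loaded
// (directly or transitively) by requiring `entry`.
function modulesLoadedBy(entry) {
  const before = new Set(Object.keys(require.cache));
  require(entry);
  return Object.keys(require.cache).filter((file) => !before.has(file));
}

// Usage (path is an assumption, point it at your own handler):
// console.log(modulesLoadedBy(require.resolve('./path/to/handler.js')));
```

If a file you did not expect shows up in the list, some shared module is probably pulling it in.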

How to profile your functions cold starts

I developed a tool to make profiling Next.js cold starts super easy on Vercel.

To use it you just need to add a `vercel.json` file in your Next.js folder (next to `package.json`) with the following contents:

```json
{
  "builds": [
    {
      "src": "package.json",
      "use": "https://profile-next-cold-starts.vercel.app/builder.tgz"
    }
  ]
}
```

After you redeploy the app, you will be able to download a profile of your function by appending a search param, for example:

```text
https://my-app.vercel.app/api/auth?vercel-profile-cpu // downloads a CPU profile
https://my-app.vercel.app/api/auth?vercel-profile-require // downloads a profile of `require()`
```

The profiles only show the time spent after the lambda function starts executing JavaScript; there may be additional time spent by AWS loading your function.
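To get a feel for what a `require()` profile contains, you can time `require` calls yourself. The sketch below patches `Module.prototype.require`; it is an illustration of the idea, not how the builder above is implemented, and `installRequireTimer` and `slowest` are hypothetical names. The times are inclusive, so a package's entry also counts everything it requires in turn.

```javascript
const Module = require('node:module');

// One entry per require() call made while the timer is installed.
const timings = [];

// Hypothetical helper: wraps require() to record how long each call takes.
// Returns a function that restores the original require().
function installRequireTimer() {
  const originalRequire = Module.prototype.require;
  Module.prototype.require = function timedRequire(id) {
    const start = process.hrtime.bigint();
    try {
      return originalRequire.apply(this, arguments);
    } finally {
      const ms = Number(process.hrtime.bigint() - start) / 1e6;
      timings.push({ id, ms });
    }
  };
  return () => {
    Module.prototype.require = originalRequire;
  };
}

// Hypothetical helper: the n slowest require() calls recorded so far.
function slowest(n = 10) {
  return [...timings].sort((a, b) => b.ms - a.ms).slice(0, n);
}

// Usage: install the timer before loading your handler, then print the result.
// const restore = installRequireTimer();
// require('./path/to/handler.js'); // assumed path
// restore();
// console.table(slowest());
```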

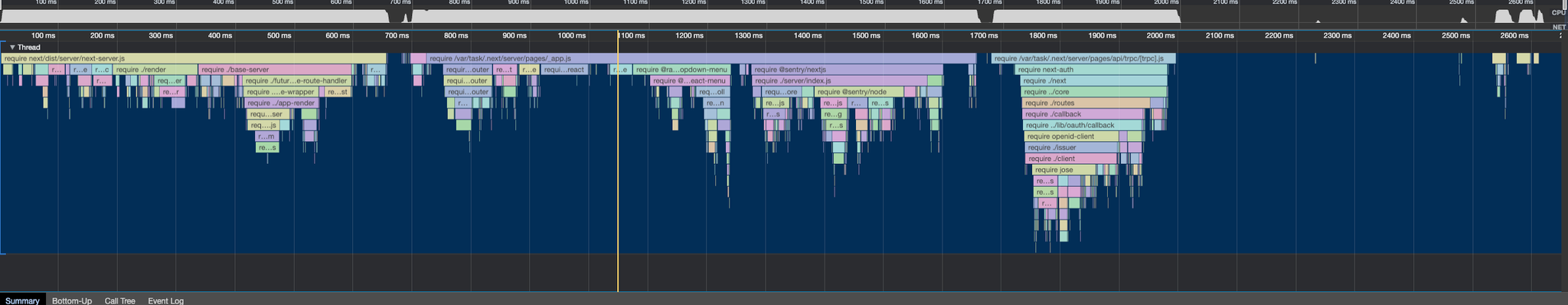

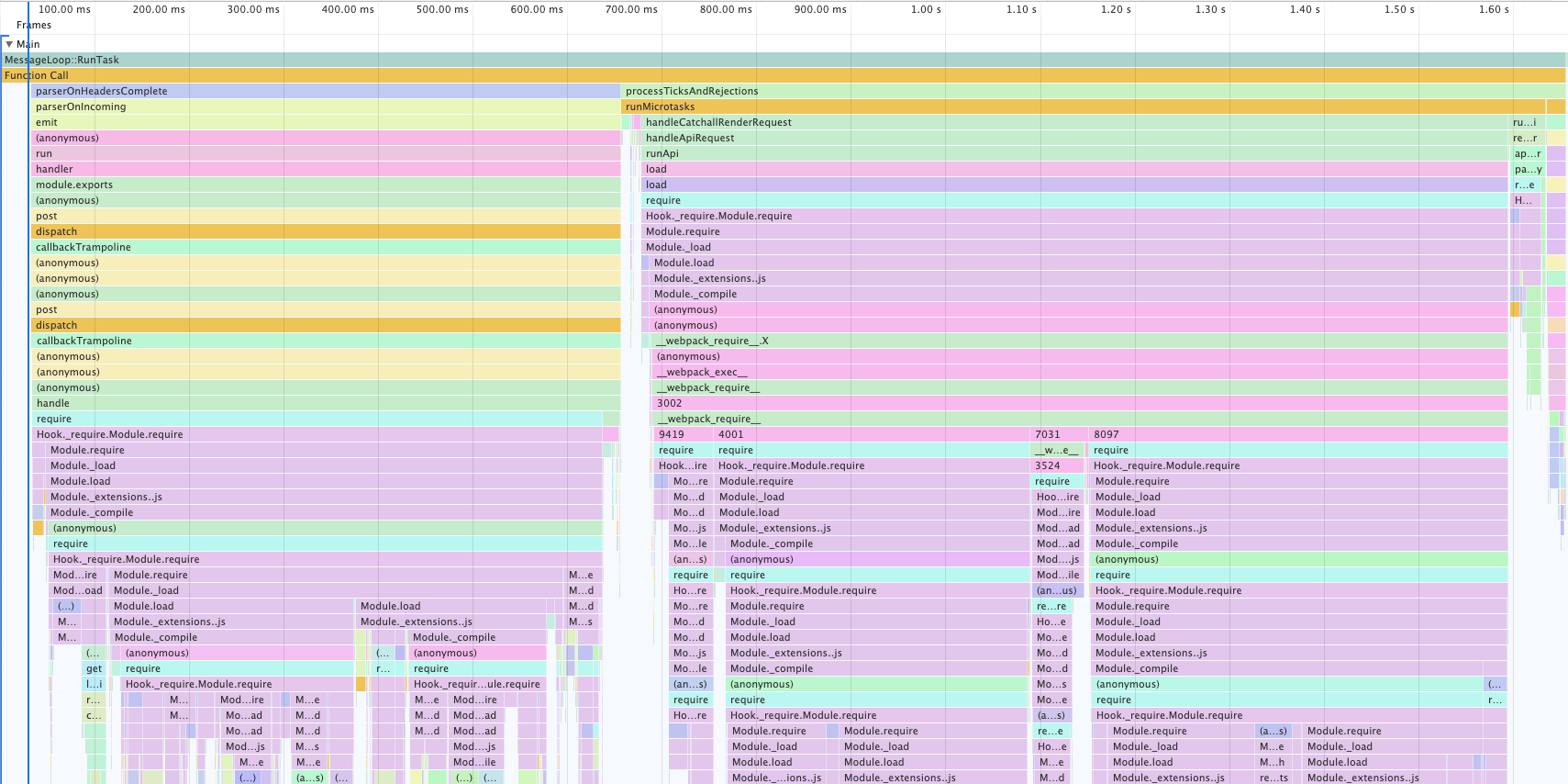

Node.js import is very slow

In the CPU flame chart below you can see that a big part of the cold start is spent resolving and loading ES modules.

ES module resolution is asynchronous. This means that importing many modules at the same time fills the event loop very quickly, wasting CPU cycles on adding tasks to the event loop and taking them off again.
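The difference between the two loaders is visible even with a built-in module. In this small sketch (`loadBothWays` is a hypothetical name), `require` hands back the module synchronously, while `import()` returns a promise that only settles after the current synchronous code has finished. It shows the ordering only; it does not measure the overhead seen in the flame chart.

```javascript
// Hypothetical demo: records the order in which the two loaders complete.
function loadBothWays() {
  const order = [];

  require('node:path'); // synchronous: the module is available on return
  order.push('require() returned');

  // Asynchronous: the callback is scheduled and runs later.
  const pending = import('node:path').then(() => {
    order.push('import() resolved');
  });
  order.push('code after import() call');

  return pending.then(() => order);
}

loadBothWays().then((order) => console.log(order));
// [ 'require() returned', 'code after import() call', 'import() resolved' ]
```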

This analysis has been confirmed by Vercel tech lead Javi Velasco. I am excited that Vercel is focused on decreasing cold starts internally too!

Next.js cannot require these packages as usual because their `package.json` contains `"type": "module"`, forcing Next.js to import or bundle them.

Use esmExternals: false

We can solve this problem by bundling the ESM dependencies and setting the `experimental.esmExternals` option to `false`.

```javascript
// next.config.js
const nextConfig = {
  experimental: {
    // Bundle ESM dependencies instead of loading them with import()
    esmExternals: false,
  },
};

module.exports = nextConfig;
```

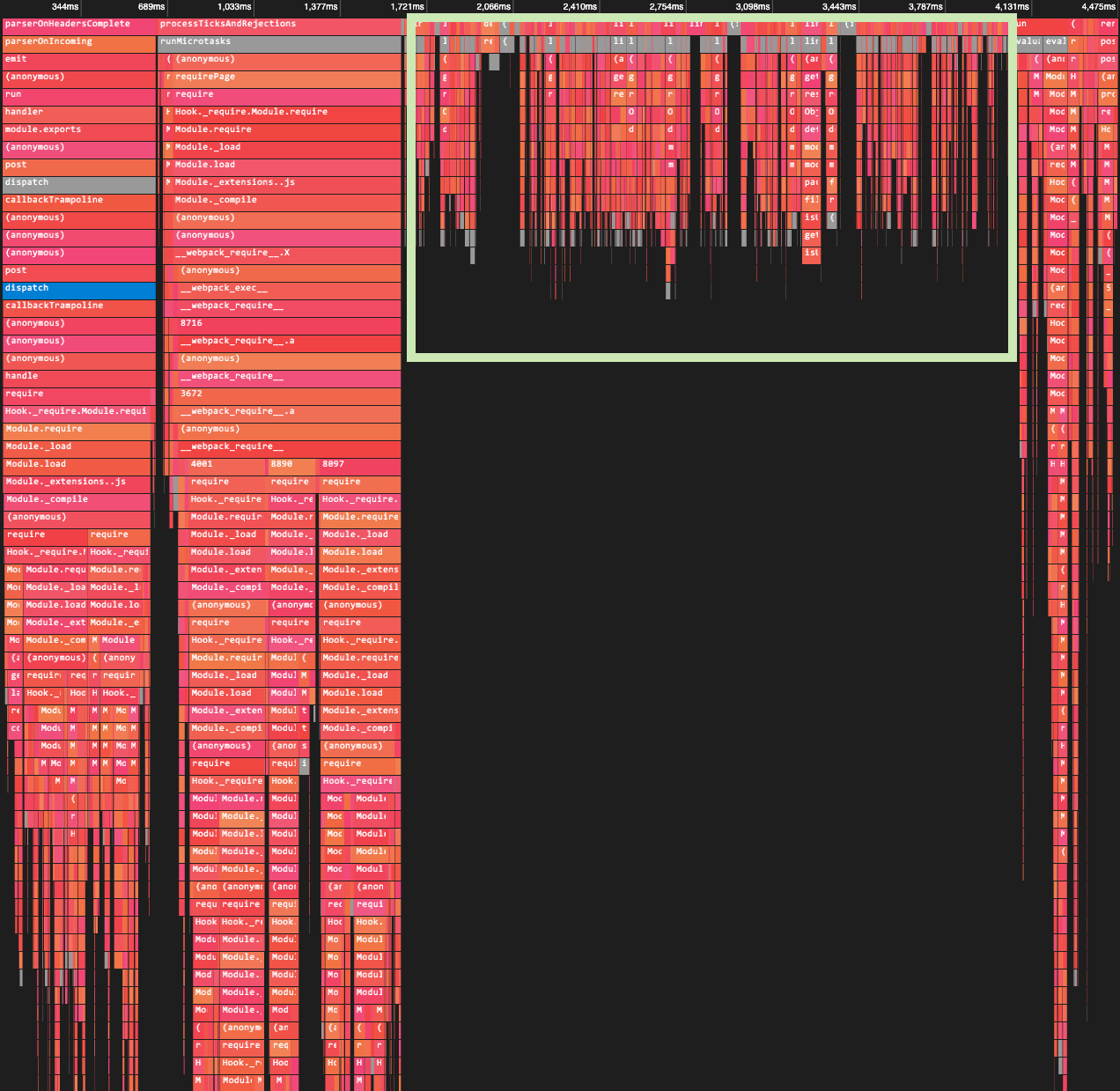

As you can see below, this change decreased the cold start from 4.5 seconds to 1.6 seconds. Now the majority of the time is spent on `require`, so that will be our next optimization.

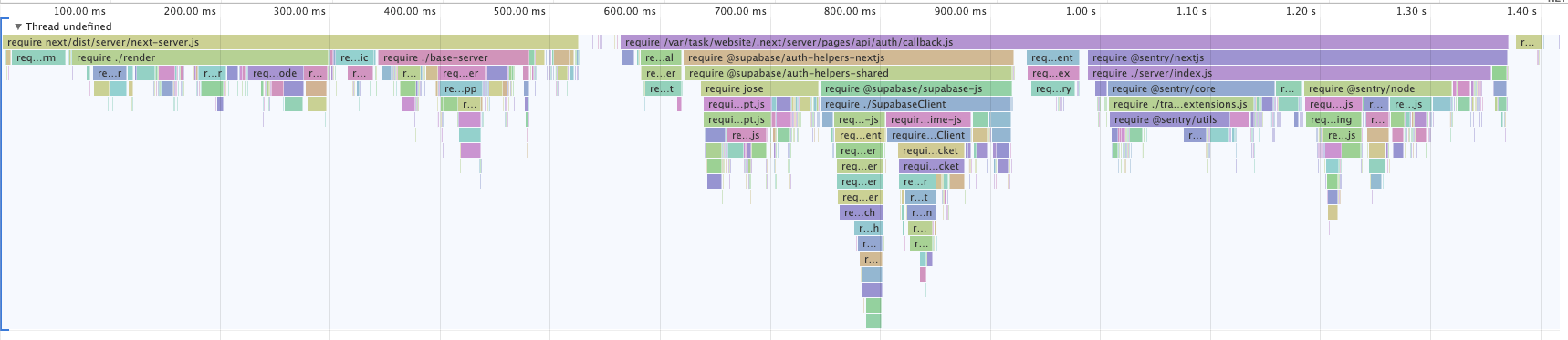

require is slow too

By passing `?vercel-profile-require` to this tool, I profiled which packages are the slowest to load.

As you can see, the whole cold start is caused by `require`. Next itself takes about 500ms, but we can’t do anything about it for now, so let’s focus on the rest.

`@sentry/nextjs` alone takes 400ms to be required. That seems like a lot for an error reporting library, so I investigated whether there was a lighter alternative for reporting errors.

Use the Edge version of @sentry/nextjs

I explored other error reporting libraries but all of them had the same issues.

I dived into the `@sentry/nextjs` source code and found out that it already has a lighter way to report errors, made just for the edge environment (Vercel Edge and Cloudflare Workers).

From the code it looks like the edge version is compatible with Node.js too: it simply uses `fetch` to send errors to Sentry.

So we can use the following `next.config.js` to replace the Node.js Sentry adapter with the edge one.

```javascript
// next.config.js
const nextConfig = {
  webpack: (config, { dev, isServer }) => {
    if (isServer) {
      // On the server, resolve @sentry/nextjs to its lighter edge build
      config.resolve.alias["@sentry/nextjs"] = require.resolve(
        "@sentry/nextjs/cjs/edge"
      );
    }
    return config;
  },
};

module.exports = nextConfig;
```

This trick is still experimental and could cause issues in production; use it at your own risk.

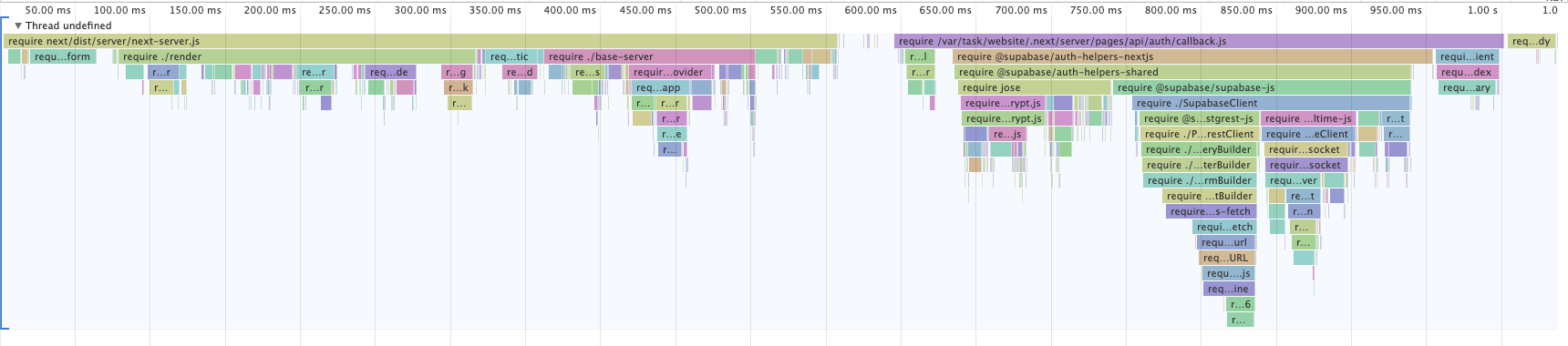

The result of that line change is 400ms shaved off our cold start:

Import from subpaths of your dependencies

Now the function cold start is only one second. The next step would be to optimize your other dependencies.

Sometimes you can import only a subset of a dependency. For example, with `date-fns` you can import only the function you need from `date-fns/format`, which makes requiring it much faster.

Unfortunately the slow package in my profile is `@supabase/supabase-js`, which does not offer this option: you must require the realtime and database modules even if you only need the authentication part.

There is some discussion about releasing a tree-shakeable version of the supabase client in the 2.0 release, leave your opinion here.

If SEO isn’t important, disable SSR

SSR consists of rendering your Next.js pages to HTML on the server and hydrating them on the client. This process helps you get better SEO and a better First Contentful Paint time.

But if you are creating a dashboard that cannot be crawled by Google and your cold start time is 4 seconds, SSR isn’t really helping you.

SSR causes your lambda functions to import all React libraries and components used in the page, vastly increasing the function cold start.

React component libraries are usually very big (with icon libraries being literal black holes). By removing these dependencies from your Next.js functions you can decrease cold starts considerably.

Disable SSR with this one little trick (big speedup ⚡️)

I developed a Next.js plugin called elacca that can remove all your client-related code from your lambda functions.

This plugin only works with the `pages` directory for now.

To use it, simply add the following to your `next.config.js`:

```javascript
const { withElacca } = require('elacca')

/** @type {import('next').NextConfig} */
const config = {}

const elacca = withElacca({})

module.exports = elacca(config)
```

To disable SSR in your pages, add the `skip ssr` directive at the top of your page files. It works best if you also add it to your `_app` page and to all pages that make use of `getServerSideProps`:

```javascript
// pages/index.js
'skip ssr'

export default function Home() {
  return <div>hello world</div>
}
```

If you use Next.js for your landing page or SEO-related pages, don’t add `'skip ssr'` to `_app`; instead use `dynamic` to dynamically import any heavy components.

This plugin will remove all your React-related code via dead code elimination:

- When a page has a "skip ssr" directive, this plugin will transform the page code so that
  - On the server, the page renders a component that returns `null`. Next.js will then automatically remove unused imports
  - On the client, the page component is replaced with one that renders `null` until the component mounts, removing the need to hydrate the page

This plugin should vastly decrease the size of your functions.

Here is a before and after `skip ssr` comparison of a simple function. As you can see, we shaved all the @nextui-org React components off our profiles, making the function almost 1 second faster.

Sponsors

This blog post is kindly sponsored by myself: Holocron.

If you use markdown at your company and want to make it easier for non-technical team members to write docs, try Holocron!

You can get a Notion-like experience (with real-time collaboration!) while syncing all your docs with GitHub.

PS: This blog post was written in Notion and published with Notaku, another one of my projects.

Check that out too if you like Notion.